The terraform import command lets you bring resources that already exist in your provider, in this case AWS, under HashiCorp Terraform management. However, it imports only one resource per run. This is extremely tedious at best and, in some situations, impractical. The records of a Route53 DNS zone are a good example: the task can become unmanageable if you have multiple DNS zones, each with tens or hundreds of records. In this article I offer you a bash script that imports all the records of a Route53 DNS zone into Terraform in a matter of seconds or a few minutes.
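To give an idea of what such automation involves, here is a minimal sketch in Python (not the bash script from the article). It assumes the record sets have already been fetched, for example from the `ResourceRecordSets` list returned by `aws route53 list-resource-record-sets`, and that the AWS provider's `ZONEID_NAME_TYPE` import ID format applies. The function name `import_commands` and the resource naming scheme are hypothetical.

```python
import re


def import_commands(zone_id, record_sets):
    """Build one `terraform import` command per Route53 record set."""
    commands = []
    for rs in record_sets:
        name = rs["Name"].rstrip(".")  # Route53 returns fully qualified names
        rtype = rs["Type"]
        # Hypothetical naming scheme: www.example.com + A -> www_example_com_A
        resource = re.sub(r"[^A-Za-z0-9_]", "_", f"{name}_{rtype}")
        if resource[0].isdigit():
            # Terraform resource names cannot start with a digit
            resource = "_" + resource
        import_id = f"{zone_id}_{name}_{rtype}"
        if "SetIdentifier" in rs:
            # Weighted, latency and similar records carry a set identifier
            import_id += f"_{rs['SetIdentifier']}"
        commands.append(
            f"terraform import aws_route53_record.{resource} {import_id}"
        )
    return commands


if __name__ == "__main__":
    sample = [{"Name": "www.example.com.", "Type": "A"}]
    for command in import_commands("Z123", sample):
        print(command)
```

Each generated command still needs a matching `aws_route53_record` block in your configuration before it can be run.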

Script to automatically change all gp2 volumes to gp3 with aws-cli

Last December Amazon announced its new EBS gp3 volumes, which offer better performance and a cost saving of 20% compared to the gp2 volumes used until now. After successfully testing these new volumes with multiple clients, I can only recommend them: they bring nothing but advantages, and in the two and a half months since the announcement I have not noticed any problems or side effects.
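As a rough illustration of the idea (a Python sketch, not the script from the article), the conversion boils down to one `aws ec2 modify-volume` call per gp2 volume. The sketch assumes the input is the `Volumes` list from `aws ec2 describe-volumes`; the function name `gp3_commands` is hypothetical.

```python
def gp3_commands(volumes):
    """Return one `aws ec2 modify-volume` command for each gp2 volume."""
    return [
        f"aws ec2 modify-volume --volume-id {v['VolumeId']} --volume-type gp3"
        for v in volumes
        if v.get("VolumeType") == "gp2"  # leave io1, st1, gp3, etc. untouched
    ]


if __name__ == "__main__":
    sample = [
        {"VolumeId": "vol-0aaa", "VolumeType": "gp2"},
        {"VolumeId": "vol-0bbb", "VolumeType": "gp3"},
    ]
    print("\n".join(gp3_commands(sample)))
```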

How to automatically update all your AWS EC2 security groups when your dynamic IP changes

One of the biggest annoyances of working with AWS from an Internet connection with a dynamic IP is that when the IP changes, you immediately lose access to all servers and services protected by an EC2 security group whose rules only allow traffic from certain specific IPs instead of allowing open connections from everyone (0.0.0.0/0).
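The core of such an update can be sketched as follows (in Python, under my own assumptions, not the procedure from the article): revoke the rule for the old address and authorize the same rule for the new one. The function name `rotate_rule_commands`, the single TCP port and the IPv4-only handling are simplifications made for this example.

```python
import ipaddress


def rotate_rule_commands(group_id, old_ip, new_ip, port=22):
    """Build the aws-cli calls that move one ingress rule to a new IPv4 address."""
    # IPv4Address raises ValueError on malformed input
    old_cidr = f"{ipaddress.IPv4Address(old_ip)}/32"
    new_cidr = f"{ipaddress.IPv4Address(new_ip)}/32"
    if old_cidr == new_cidr:
        return []  # the address did not change, nothing to do
    common = f"--group-id {group_id} --protocol tcp --port {port}"
    return [
        f"aws ec2 revoke-security-group-ingress {common} --cidr {old_cidr}",
        f"aws ec2 authorize-security-group-ingress {common} --cidr {new_cidr}",
    ]


if __name__ == "__main__":
    for command in rotate_rule_commands("sg-0123", "203.0.113.5", "203.0.113.9"):
        print(command)
```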

15 Tips and Tools for Successful Remote Working after Covid-19

Many people and companies are being forced by the coronavirus crisis (Covid-19) to adopt different forms of remote working these days. As a cloud solutions architect (Cloud Computing) and freelance system administrator, I have been working this way successfully for many years, so in recent days some of them have been asking me for advice on what strategies to follow and what useful applications exist for telecommuting efficiently. That is why I decided to go a step further and write this article, in which I compile a series of recommendations and tools that I hope will help the many people who are forced to work remotely from home in this new coronavirus era. I also hope that the people and companies that see an opportunity in all this and choose to commit to remote work for good, either partially or fully, will find it useful too.

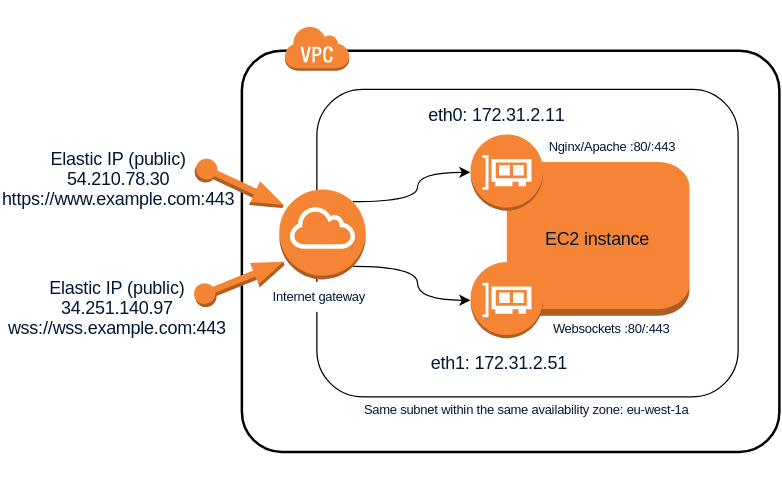

How to use 2 network interfaces on the same AWS subnet in Linux

The following Linux procedure describes how to use 2 network interfaces connected to the same AWS subnet at the same time and, more importantly, how to make communication work well on both of them, internally (between hosts on the same subnet) as well as externally (both interfaces visible from the Internet). This can be useful, for example, when you want the same EC2 instance to host a web server serving http or https requests and, at the same time, a websockets server (ws:// or wss://) listening on the same port 80 or 443 respectively. There are other ways to achieve this, such as configuring Nginx to discriminate web traffic (http) from websockets traffic (ws) and act as a proxy that redirects the corresponding requests to the websockets server. However, the solution I propose seems simpler and to some extent more efficient, because it does not need to redirect traffic, which always introduces a small latency, and it keeps both servers completely independent within the same host. The only drawback is that you will need to assign 2 Elastic IP addresses to the same EC2 instance instead of only 1, but this will also give you more flexibility when establishing rules in the security groups or in the subnet NAT rules.
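One common way to make two interfaces on the same subnet answer through the interface that received the traffic is source-based policy routing: one routing table per interface plus one `ip rule` per source address. The Python sketch below only generates the commands and is an assumption on my part, not necessarily the procedure of the full post. It relies on the fact that the VPC router sits at the first usable address of an AWS subnet; the function name `policy_routing_commands` and the table numbers are hypothetical.

```python
import ipaddress


def policy_routing_commands(subnet_cidr, interfaces, first_table=101):
    """Build `ip route` / `ip rule` commands, one routing table per interface.

    interfaces is a list of (device, private_ip) tuples.
    """
    # In an AWS subnet the VPC router is the network base address + 1
    gateway = ipaddress.ip_network(subnet_cidr)[1]
    commands = []
    for offset, (device, address) in enumerate(interfaces):
        table = first_table + offset
        # Default route for this table leaves through this interface
        commands.append(
            f"ip route add default via {gateway} dev {device} table {table}"
        )
        # Packets sourced from this address use this interface's table
        commands.append(f"ip rule add from {address} table {table}")
    return commands


if __name__ == "__main__":
    nics = [("eth0", "10.0.1.10"), ("eth1", "10.0.1.20")]
    print("\n".join(policy_routing_commands("10.0.1.0/24", nics)))
```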

How to perform MySQL/MariaDB backups: mysqldump command examples

Although there are different methods for backing up MySQL and MariaDB databases, the most common and effective one is to use a native tool that both MySQL and MariaDB make available for this purpose: the mysqldump command. As its name suggests, this is a command-line program that performs a complete export (dump) of all the contents of a database, or even of all the databases in a running MySQL or MariaDB instance. Of course, it also allows partial backups, i.e. only some specific tables, or even only a subset of the records in a table.

The mysqldump command offers a multitude of parameters that make it very powerful and flexible. Since having so many options can be confusing, in this post I collect some of the most frequent usage examples, with the most common parameters and those most useful in the day-to-day life of a system administrator.
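To make the three cases above concrete (all databases, specific tables, a subset of records), here is a small Python sketch that assembles the corresponding mysqldump argument list. The helper `mysqldump_args` and the `backup` user are hypothetical; the sketch assumes the password comes from an option file such as `~/.my.cnf` and uses `--single-transaction`, which gives a consistent snapshot for InnoDB tables.

```python
def mysqldump_args(database=None, tables=(), where=None, user="backup"):
    """Assemble a mysqldump argument list for full or partial backups."""
    args = ["mysqldump", f"--user={user}", "--single-transaction"]
    if database is None:
        # Full export of every database in the instance
        args.append("--all-databases")
        return args
    if where:
        # Only dump the rows matching this condition
        args.append(f"--where={where}")
    args.append(database)
    args.extend(tables)  # no tables means the whole database
    return args


if __name__ == "__main__":
    print(mysqldump_args())
    print(mysqldump_args("shop", ["orders"], where="created_at >= '2021-01-01'"))
```

The resulting list can be passed to `subprocess.run` with standard output redirected to the dump file.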

Linux remote control from your smartphone via SSH button widgets

In this post I will tell you about an Android app that is extremely useful for running commands remotely on a Linux computer: Hot Button SSH Command Widget. This application lets you conveniently launch any command you want on a remote computer through SSH with just the push of a button on the screen of your mobile phone or tablet. This not only makes it easier to automate repetitive tasks, but is also very interesting from a security perspective, for the same reasons I explained in my Automatically lock/unlock your screen by Bluetooth device proximity post. For example, it allows you to lock and unlock the screen without having to type your password again and again in sight of other people.

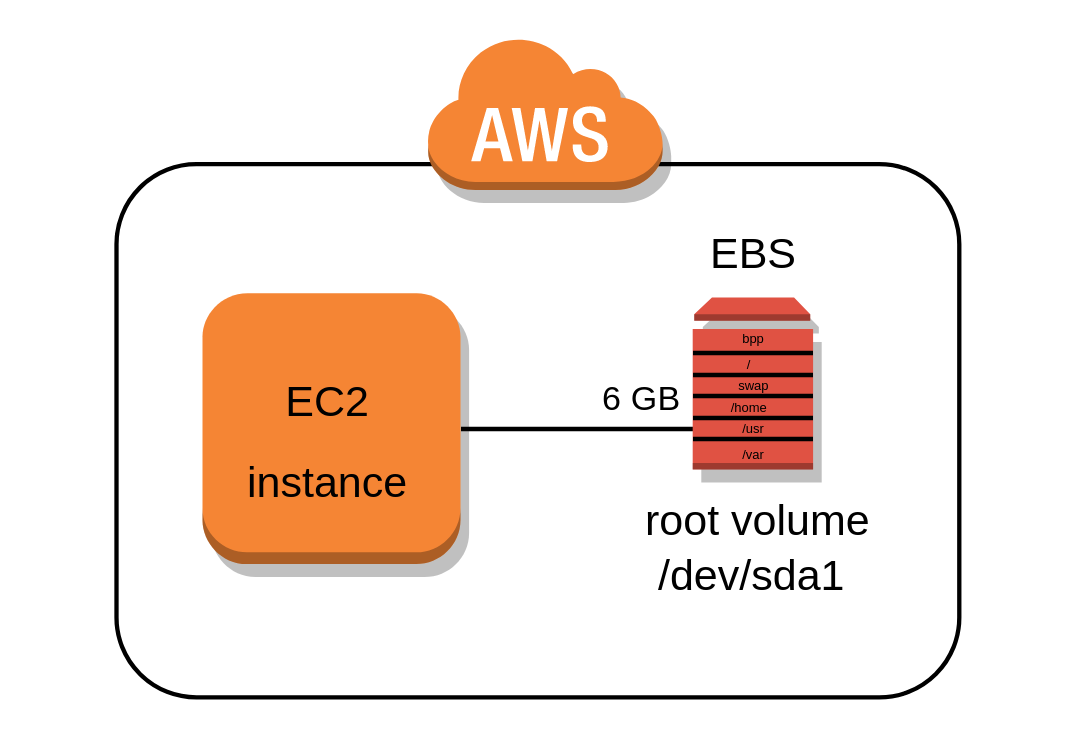

Partitioning and resizing the EBS Root Volume of an AWS EC2 Instance

One of the few things I do not like about the AWS EC2 service is that all available images (AMIs) used to launch new instances require a root volume of at least 8 or 10 GB in size, and all of them have a single partition on which the root filesystem is mounted.

In my post The importance of properly partitioning a disk in Linux I discussed why, in my opinion, this approach is not appropriate. Now I will address in a practical way how to divide those volumes into multiple partitions, either keeping the 8-10 GB base size or making them even smaller to save costs when you want to deploy smaller servers that do not need as much storage space.